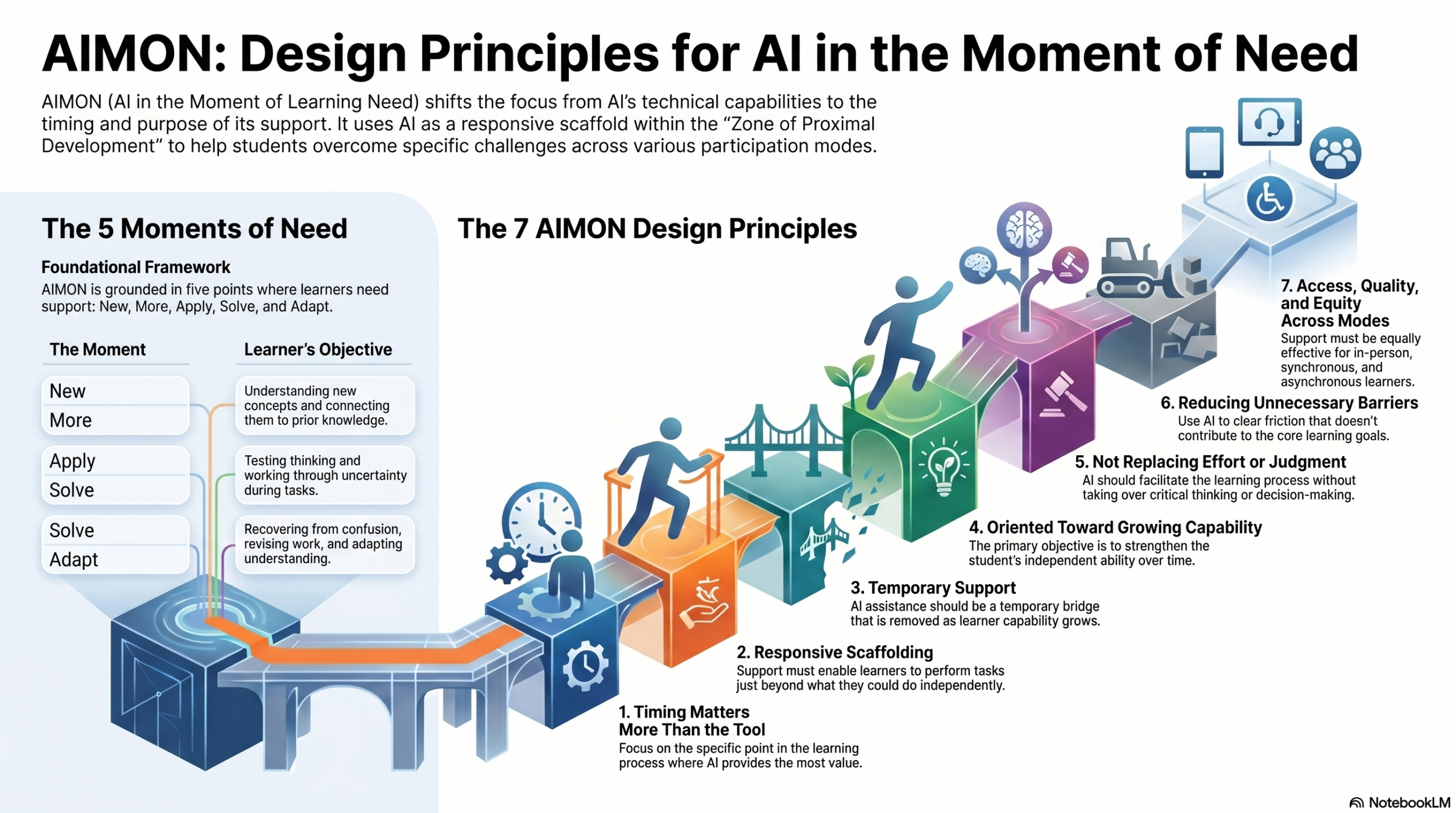

In the first post in this series, I introduced the AI in the Moment of Learning Need (AIMON) framework as a way of thinking about AI not as a general-purpose tool, but as a form of support aligned to specific points in the learning process.

That shift from tool to timing matters.

But timing alone is not enough. If AI is to support meaningful learning rather than simply help students complete tasks, its use needs to be intentionally designed. Without that design, even well-intentioned use can drift toward convenience, overuse, or misalignment with what we actually want students to learn.

In this post, I outline a set of design principles that can guide the use of AI in ways that support learning across the course experience. These are not rigid rules. They are design commitments: ways of thinking that can help align AI use with how learning actually happens.

Care to listen to an overview? Here is a short (5 min) debate on the principles, created with NotebookLM from this blog post.

Principle 1: Align AI Use to Moments of Learning Need

One of the most common patterns I see in higher education, among both faculty and students, is the use of AI as a general-purpose tool, available at any time, for almost any task. While that flexibility can be appealing, it often leads to use that is convenient rather than instructionally meaningful.

AIMON begins with a different premise: AI use should be aligned to specific moments in the learning process when students are most likely to benefit from targeted support.

In a typical course, these moments are not random. They tend to cluster around recognizable phases in the learning arc: 1) when students are first trying to understand expectations, 2) when they are working through unfamiliar or complex ideas, 3) when they are applying concepts, 4) when they are revising their work, and 5) when they are evaluating their own understanding. Each of these moments presents a different kind of learning need, and therefore calls for a different kind of support.

Designing AI use around these moments helps shift the focus from what the tool can do to what the learner needs right now. This is a subtle but important move. It positions AI not as a constant presence, but as a form of scaffolding introduced at points where it can extend the learner’s capability. In this sense, AI can help support learners as they move within their Zone of Proximal Development (Vygotsky, 1978), providing assistance that helps them progress beyond what they could do independently while still requiring them to engage in the underlying thinking.

There is also a cognitive dimension to this alignment. When students encounter new or complex material, their working memory can become overloaded, making it difficult to process and retain information effectively. Well-timed support, whether from an instructor, a peer, or an AI tool, can help manage that load by clarifying concepts, offering examples, or breaking down tasks into more manageable parts. This idea is consistent with principles from Cognitive Load Theory, which emphasize the importance of structuring support to match the learner’s current capacity (Sweller, 1988).

A simple way to begin applying this principle is to look at your course not as a sequence of assignments, but as a sequence of learning moments. Where do students typically hesitate, struggle, explore, or revise? Those points in the learning arc are where AI support is most likely to be useful, and where its use can be designed intentionally rather than left to chance.

Principle 2: Align AI Use to Learning Outcomes

Even when AI use is well-timed, it can still miss the mark if it is not clearly connected to what students are expected to learn.

This principle is straightforward but essential: AI use should be explicitly aligned to intended learning outcomes and the type of learning work students are expected to do.

In practice, this means asking a simple but often overlooked question: What kind of thinking should students be doing here? If the goal is conceptual understanding, then AI might help students explain ideas in their own words or compare alternative interpretations. If the goal is application, AI might support scenario exploration or problem framing. If the goal is evaluation, AI might prompt critique or reflection.

What AI should not do is perform the very thinking that the assignment is meant to develop.

This distinction helps guard against a common failure mode in current AI use: tasks are completed successfully, but learning is shallow or bypassed entirely. By anchoring AI use to learning outcomes, we keep the focus on the development of knowledge and skills, not just the production of artifacts.

Principle 3: Use AI to Scaffold Reasoning, Not Replace It

Closely related to alignment is the role AI is expected to play.

In AIMON, AI is most effective when it is used to scaffold reasoning processes rather than generate finished artifacts.

This builds directly on the concept of scaffolding introduced in the previous post. Support should help learners move forward in their thinking, not remove the need for thinking altogether. When AI is used to generate complete responses, drafts, or solutions without requiring engagement, it can short-circuit the very processes that lead to learning.

By contrast, when AI is used to:

* explain a concept in a different way

* generate examples for comparison

* ask follow-up questions

* highlight gaps or inconsistencies

it supports the learner’s reasoning without replacing it.

This principle also connects to broader work on self-regulated learning, which emphasizes the importance of learners actively monitoring, evaluating, and directing their own thinking (Schunk & Greene, 2018). AI can support that process, but only if it is designed to prompt and extend it rather than bypass it.

Principle 4: Make Boundaries and Expectations Explicit

One of the most consistent challenges faculty report is uncertainty, both their own and their students’, about what constitutes appropriate AI use.

This leads to a simple but critical principle: boundaries, transparency, and citation practices must be explicitly articulated.

When expectations are unclear, students will fill in the gaps themselves, often in ways that do not align with instructional intent. Some will avoid AI entirely out of caution. Others will use it in ways that undermine their learning. Neither outcome is desirable.

Clear guidance can include:

* when AI use is encouraged, optional, or restricted

* what kinds of support are appropriate

* how AI contributions should be acknowledged or cited

The goal is not to control every use, but to provide enough structure that students can make informed decisions about how to engage with these tools in ways that support their learning.

Principle 5: Preserve Learner Agency with Non-AI Options

As AI tools become more integrated into learning environments, it is important to ensure that their use remains a choice, not a requirement.

This principle emphasizes that non-AI alternatives should exist to preserve learner agency and support equitable participation.

Students come to our courses with different levels of access, experience, and comfort with AI tools. Some may have ethical concerns. Others may simply prefer to work without them. Designing learning experiences that allow for meaningful participation with or without AI helps ensure that students are not disadvantaged by their choice.

This is particularly relevant in flexible and multimodal learning environments, where students may already be navigating different participation conditions. Providing parallel pathways, where the learning goals are the same but the means of support can vary, helps maintain both rigor and inclusivity.

Principle 6: Position AI Outputs as Provisional and Critique-able

One of the more subtle but important design considerations is how AI-generated content is framed.

In AIMON, AI outputs should be positioned as provisional and critique-able rather than authoritative.

Students can easily interpret AI responses as correct, complete, or final, especially when those responses are fluent and confident. If left unexamined, this can discourage critical thinking and reinforce passive acceptance.

By contrast, when AI output is treated as:

* a starting point

* a perspective to evaluate

* a draft to refine

* a claim to verify

it becomes a resource for thinking rather than a replacement for it.

This principle aligns with constructivist perspectives on learning, where knowledge is actively constructed through engagement, questioning, and reflection rather than simply received (Duffy & Cunningham, 1996).

Principle 7: Design for Access Across Participation Contexts

Finally, AI-supported learning activities should be designed with attention to the different contexts in which students engage.

AI-supported activities should provide meaningful learning support across participation contexts, helping ensure access, quality, and equity.

Students do not all encounter learning opportunities at the same time, in the same place, or in the same way, which is a core consideration in multimodal and HyFlex course design (Beatty, 2019). Some may have immediate access to instructors or peers. Others may be working independently, asynchronously, or under constraints that limit interaction.

Well-designed AI support can help bridge some of these gaps by providing:

* timely explanations

* opportunities for guided practice

* feedback during drafting and revision

When aligned with the other principles above, this kind of support can help create more consistent learning experiences without requiring uniform participation conditions.

Why This Works

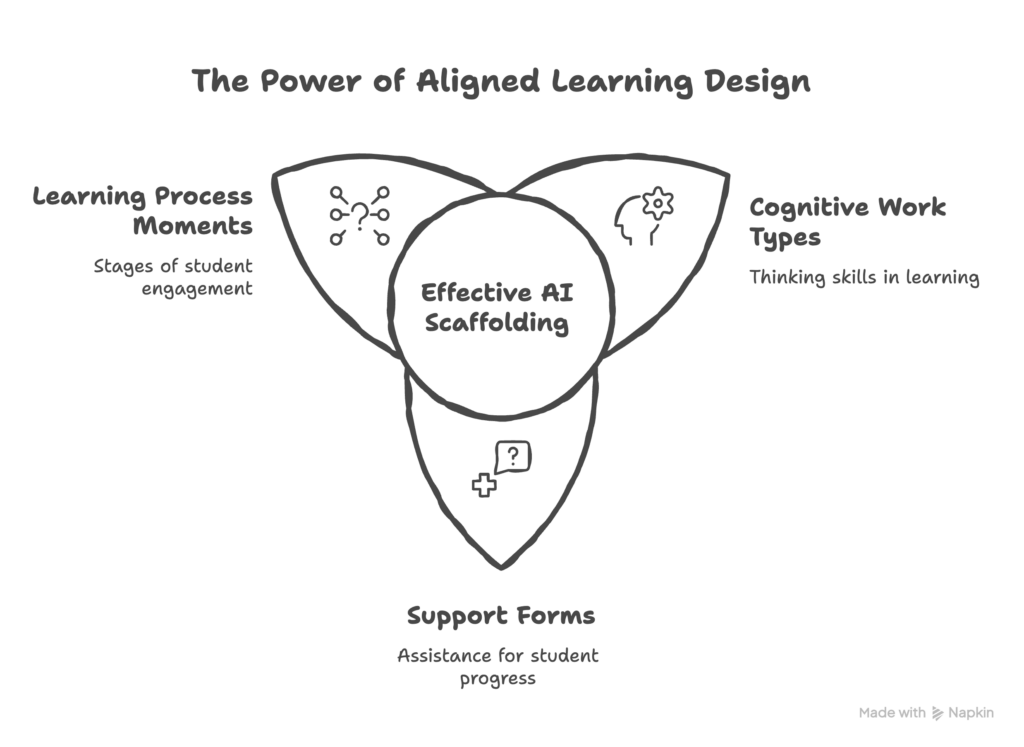

Taken together, these principles align three critical elements of learning design:

The moment in the learning process: Learning unfolds over time, and students encounter different kinds of needs as they move through a course. By attending to these moments, when students are uncertain, exploring, applying, or reflecting, we can introduce support when it is most likely to matter. This helps ensure that AI is used purposefully rather than continuously or by default.

The type of cognitive work required: Not all learning tasks demand the same kind of thinking. Some require explanation and sense-making, others application, evaluation, or synthesis. Designing AI use with this in mind helps preserve the intellectual work we want students to do, rather than allowing the tool to take over that work.

The form of support provided: Support can take many forms, from clarification and examples to feedback and prompts for reflection. When AI is used as scaffolding, it offers assistance that helps students move forward without removing the need for engagement. Over time, this kind of support can contribute to deeper understanding and greater independence.

When these elements are aligned, AI can function as an effective scaffold, reducing unnecessary barriers while preserving the thinking that leads to learning. When they are not aligned, AI use tends to drift toward task completion at the expense of learning.

The goal of AIMON is not to increase the use of AI, but to improve the design of its use so that it contributes meaningfully to learning.

Try this in your course

Choose one assignment or activity in your course.

* Where are students most likely to hesitate, struggle, explore, or need feedback?

* What kind of thinking do you want them to do at that moment?

* How might a carefully designed AI interaction support that thinking without replacing it?

Looking Ahead

In the next post, I’ll begin working through the learning arc, starting with the earliest phase: how to support students as they get started in a course, reduce uncertainty, and build initial momentum without reducing their responsibility for engaging with the work.

References

Beatty, B. J. (2019). Hybrid-flexible course design: Implementing student-directed hybrid classes. EdTech Books. https://edtechbooks.org/hyflex/

Duffy, T. M., & Cunningham, D. J. (1996). Constructivism: Implications for the design and delivery of instruction. In D. H. Jonassen (Ed.), Handbook of research for educational communications and technology (pp. 170–198). Macmillan.

Schunk, D. H., & Greene, J. A. (Eds.). (2018). Handbook of self-regulation of learning and performance (2nd ed.). Routledge. https://doi.org/10.4324/9781315697048

Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257–285. https://doi.org/10.1207/s15516709cog1202_4

Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press.

Wood, D., Bruner, J. S., & Ross, G. (1976). The role of tutoring in problem solving. Journal of Child Psychology and Psychiatry, 17(2), 89–100. https://doi.org/10.1111/j.1469-7610.1976.tb00381.x

Author

Dr. Brian Beatty is Professor of Instructional Design and Technology in the Department of Equity, Leadership Studies and Instructional Technologies at San Francisco State University. At SFSU, Dr. Beatty pioneered the development and evaluation of the HyFlex course design model for blended learning environments, implementing a “student-directed-hybrid” approach to better support student learning.